PicoLM

PicoLM is a minimal, zero-dependency LLM inference engine written in C11 designed to run 1-billion parameter models on resource-constrained hardware. It features a tiny binary footprint of approximately 80KB and a runtime memory requirement of just 45MB, enabling local AI on devices like the Raspberry Pi Zero and LicheeRV Nano. The engine supports GGUF format models and utilizes memory-mapping and SIMD acceleration for efficient performance without internet connectivity.

PicoLM is an ultra-lightweight, from-scratch inference engine that enables running 1-billion parameter Large Language Models (LLMs) on hardware as affordable as a $10 board. Built in pure C11 with zero external dependencies, it provides a fully offline “local brain” for AI applications, requiring no cloud access, no API keys, and no Python environment.

The Perfect Match: PicoLM + PicoClaw

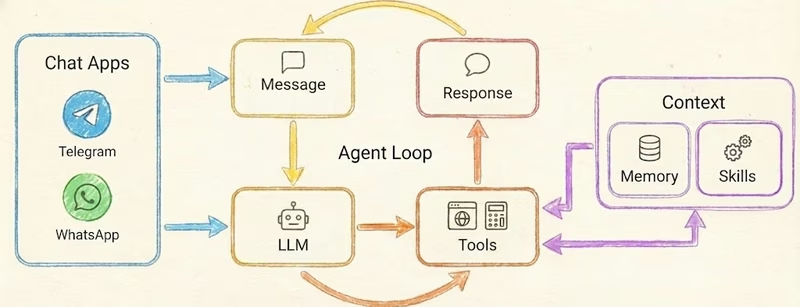

PicoLM was originally developed to serve as the local inference backend for PicoClaw, an AI assistant designed for low-cost hardware. In this architecture, PicoClaw handles the agent loop and communication interfaces (like Telegram or Discord), while spawning PicoLM as a subprocess to process prompts. This combination allows for a completely private AI agent that functions without an internet connection, processing messages and generating responses locally.

For tasks requiring structured data, PicoLM includes a specialized grammar mode. This ensures that even small 1B parameter models generate syntactically valid JSON, which is essential for reliable tool calling and integration into automated workflows.

Core Capabilities and Architecture

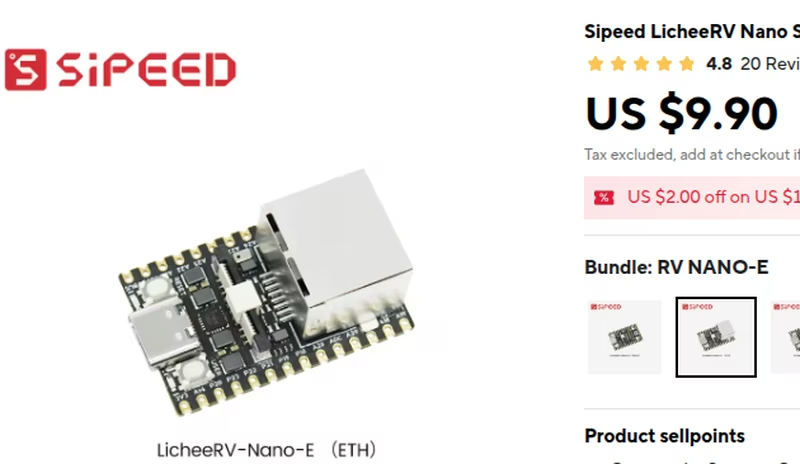

The engine is a minimal implementation of approximately 2,500 lines of code. It is designed to run TinyLlama 1.1B and other LLaMA-architecture models on hardware that typical inference frameworks cannot support, such as the Raspberry Pi Zero 2W or the RISC-V based Sipeed LicheeRV.

One of the most significant architectural features is the use of memory-mapping (mmap). Instead of loading a massive model file entirely into RAM, PicoLM maps the file and streams individual layers through memory as needed. This allows a 638MB model to operate on a device with only 256MB of RAM, with a total runtime memory footprint of about 45MB including the KV cache.

Key Technical Features

- GGUF Native Support: Reads GGUF v2/v3 files directly with support for various K-Quants (Q2_K through Q8_0).

- SIMD Acceleration: Auto-detects and utilizes ARM NEON and x86 SSE2 instructions for high-performance vector operations.

- Flash Attention: Implements online softmax to eliminate the need for large attention buffers, critical for memory-constrained devices.

- Optimized Math: Uses pre-computed RoPE (Rotary Position Embedding) tables and fused dequantization/dot-product kernels to reduce CPU overhead and memory traffic.

- Zero Dependencies: Relies only on standard system libraries (

libc,libm,libpthread), making it highly portable across Linux, Windows, and macOS.

Performance and Efficiency

PicoLM is optimized to extract maximum performance from modest CPUs. On a Raspberry Pi 4, it can achieve generation speeds of approximately 8 tokens per second. On even more constrained hardware like the Pi Zero 2W, it maintains a usable 2 tokens per second.

The engine’s efficiency is further enhanced by KV cache persistence. By saving the prompt state to a file, the system can skip the prefill stage on subsequent runs with the same prompt, resulting in a significant reduction in latency for repeated system instructions. This makes it an ideal solution for embedded systems where power and compute resources are at a premium.